Reducing TTFT by CPUMaxxing Tokenization

Tokenization is a silent bottleneck in agentic LLM inference. Crusoe and NVIDIA Dynamo built fastokens, an open-source Rust BPE tokenizer that delivers 9.1× average speedup over HuggingFace and up to 40% faster TTFT on long-context workloads.

The tokenization bottleneck

Tokenization is a critical yet often overlooked step in the LLM inference pipeline. In agent-based workloads, prompts grow large as context accumulates from tool calls, retrieved code or files, execution results, conversation history, and intermediate reasoning. Every request must be tokenized before the model can process it, and as prompt sizes increase, tokenization becomes a non-trivial portion of the time to first token (TTFT).

In latency-sensitive deployments, TTFT must be minimized to maintain a good user experience. Analysis of real customer traffic shows that many applications, particularly agent-based systems, operate with very large prompts while maintaining high cache hit rates. We frequently observe prompt sizes exceeding 50K tokens with cache hit rates above 90%. Under these conditions, tokenization can account for a significant portion of the total TTFT.

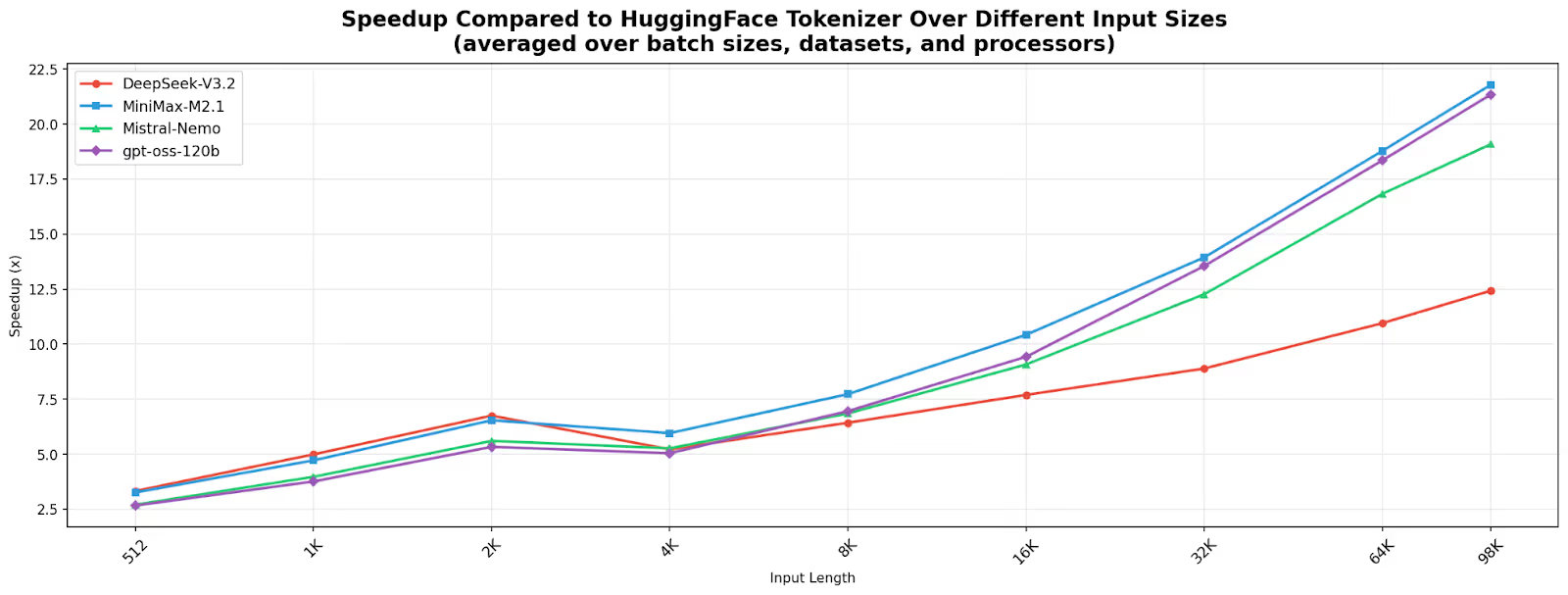

To address this bottleneck, we worked closely with the NVIDIA Dynamo [1] team to develop fastokens, a drop-in BPE tokenizer implemented in a high-performance Rust engine. Across a benchmark covering four models (DeepSeek-V3.2, MiniMax-M2.1, Mistral-Nemo, and GPT-OSS-120B), two datasets (LongBench and ShareGPT), three CPU architectures, and input lengths from 512 to 100K tokens, fastokens achieves a 9.1x average speedup over the HuggingFace tokenizer. The gains increase with prompt length. For prompts above 50K tokens, typical in agent workloads, the average pure tokenization speedup reaches 17.4x with a peak of 31x, and translates into end-to-end TTFT improvements of up to 40% in real inference workloads that we tested.

fastokens is open source and integrates with NVIDIA Dynamo and SGLang, and supports many popular models including NVIDIA Nemotron, DeepSeek, Qwen, GLM, MiniMax, and Mistral.

Core optimizations

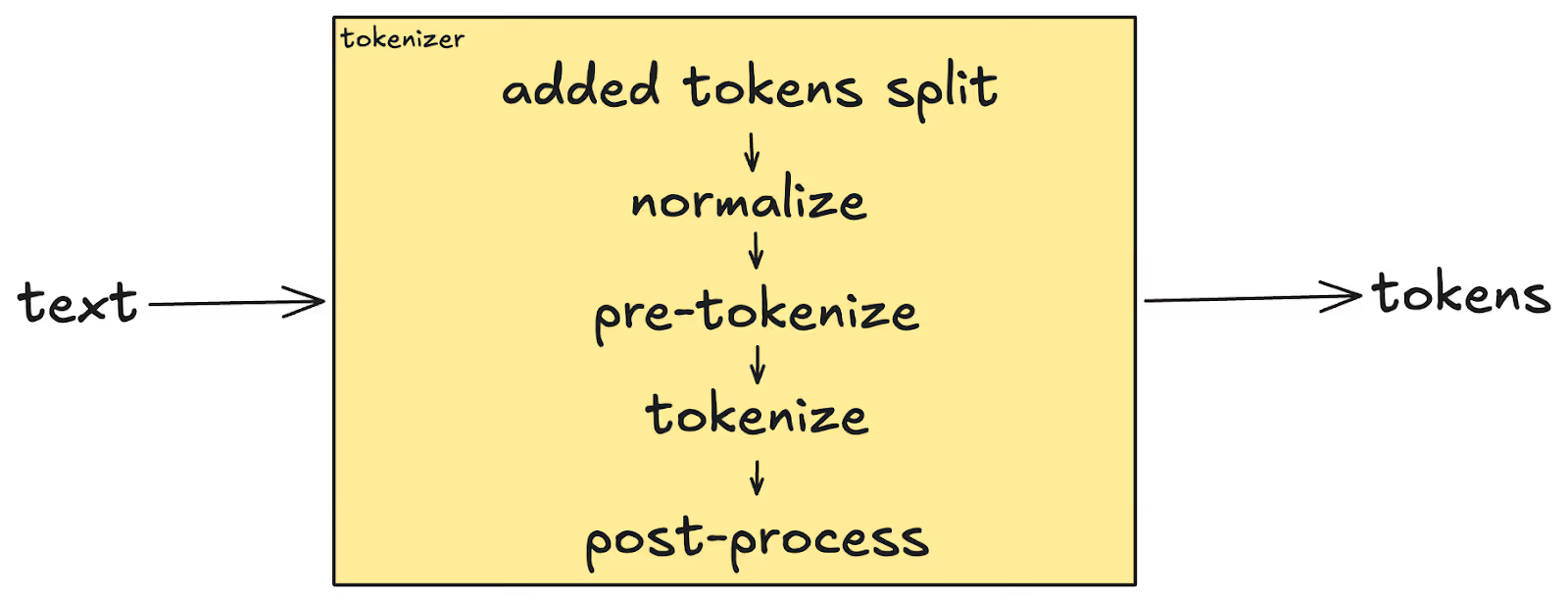

Tokenization mainly contains 5 steps:

- Added tokens split: The input is scanned for special literal strings (e.g. <|endoftext|>, <s>). Matched spans are assigned their token IDs immediately, while the remaining text segments continue through the pipeline.

- Normalize: Each text segment is Unicode-normalized (typically NFC) so that equivalent representations - such as a precomposed `é` vs. `e` + combining accent - collapse to a single canonical form.

- Pre-tokenize: A regex splits normalized text into smaller chunks (e.g. along word and punctuation boundaries). For byte-level models, each byte is also mapped to a dedicated Unicode character.

- Tokenize (in our case - BPE): Byte Pair Encoding merges adjacent tokens according to a learned priority table, starting from individual characters (or bytes) and progressively combining them into larger subwords until no more merges apply.

- Post-process: Template-defined special tokens (BOS, EOS, CLS, SEP, etc.) are inserted at the appropriate positions in the final ID sequence.

Many optimizations had to come together to enable the fast tokenizer. The top three are:

CPUMaxxing

Current tokenization implementations often leave much to desire when it comes to fully utilizing the CPU, and we made sure to squeeze every last drop. In modern superchips such as the NVIDIA Grace CPU, the amount of cores can reach triple-digits, and using them to the max is not simple.

We did it in two places:

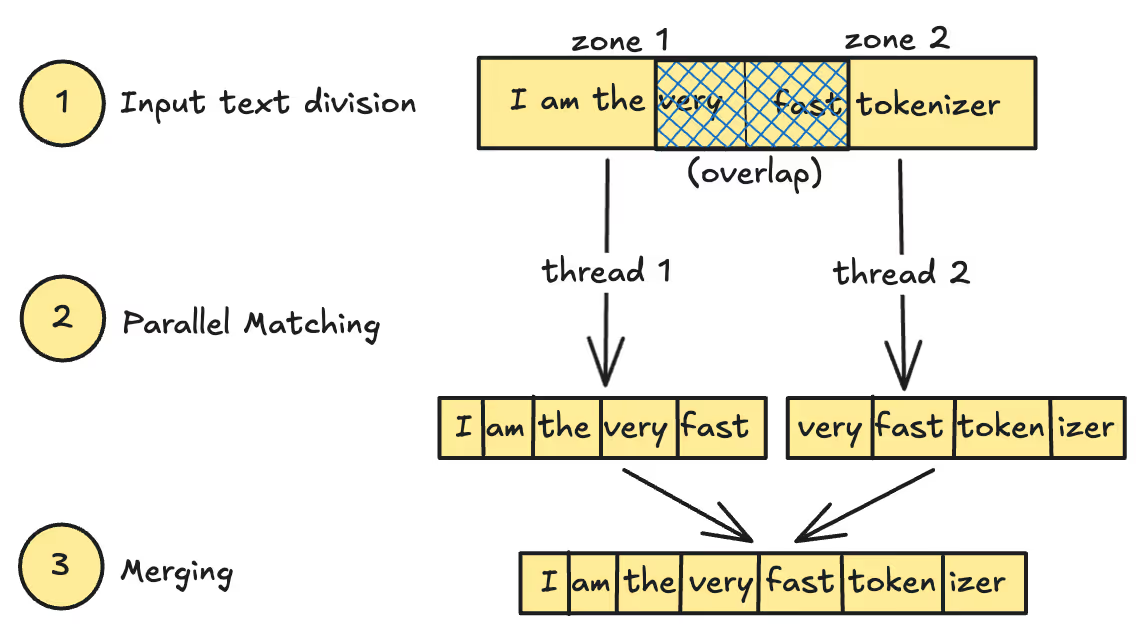

- Pre-tokenize: For large inputs, the text is divided into authority zones (one per thread) with 1 KB of overlap at boundaries. Each thread matches its zone independently; results are merged by discarding matches whose start position falls outside the thread's authority zone. The overlap ensures that patterns straddling a boundary are found in full by at least one thread, and the authority rule deduplicates them deterministically. This turns a fundamentally sequential regex scan into a parallel one.

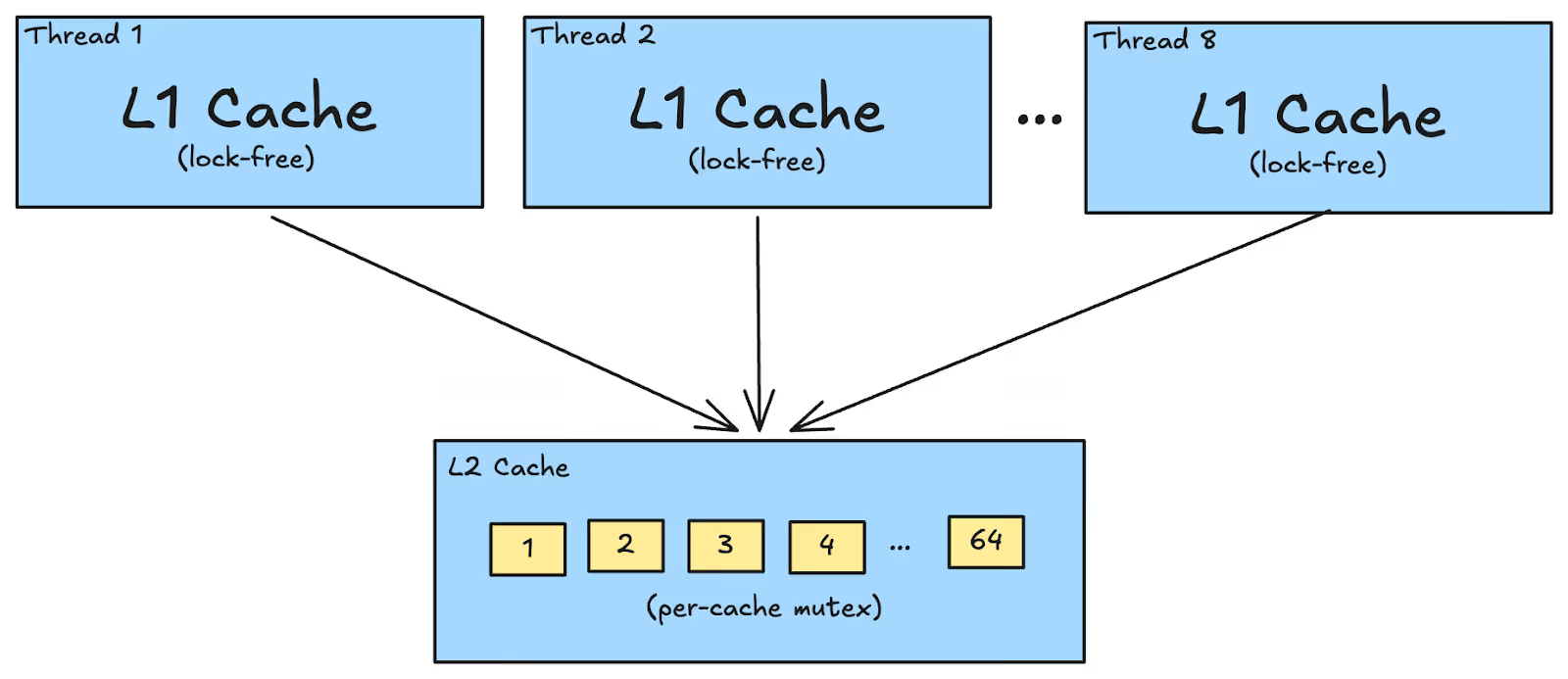

- Tokenize: Independent pre-tokenized chunks are BPE-encoded in parallel using a dedicated, fixed-size thread pool (capped at 8 threads). Each thread maintains a two-level cache: L1 is a thread-local open-addressing hash table (64K fixed slots, lock-free), and L2 is a globally shared cache split across 64 independently-locked shards. An L1 hit - the common case for repeated subwords within a single thread's workload - requires zero synchronization. An L1 miss checks L2 - and an L2 hit promotes the entry back to L1 for future access. When both caches are a miss, the sequence is re-calculated, and cached in L1 and L2. Reusing the same threads keeps L1 caches warm, delivering near-linear speedups on multi-core machines.

Dynamic memory

Good tokenization hinges on saving every nanosecond possible, and every dynamic operation counts. Wherever possible, allocations were replaced by single buffers and preallocated scratch spaces:

- Pre-tokenization splits: Regex splitting typically produces hundreds or thousands of separate substring allocations. Instead, we keep the entire input in a single heap-allocated buffer and represent each split as a Rust Range<usize> (two machine words) into that buffer. For an input that produces 1,000 splits, this is 1 allocation instead of 1,000 - eliminating thousands of malloc/free round-trips and also keeping the data contiguous in cache.

- Byte-level encoding: GPT-style tokenizers require a byte-to-Unicode mapping step. Rather than calling char::encode_utf8 per byte at runtime, we pre-compute a 256-entry lookup table of 2-byte UTF-8 encodings at compile time. The inner loop writes 2 bytes unconditionally via a single copy and advances by the actual length (1 or 2), making it branchless. When the model uses byte-level pre-tokenization, a fused code path skips the Unicode round-trip entirely and merges directly from raw bytes using a pre-computed 256×256 byte-pair table for O(1) initial merge lookups.

- BPE merge heap: BPE continuously merges adjacent tokens to create the optimal final token ids. We do this using a priority queue, which is backed by a thread-local MergeScratch buffer that is reused across tokenizations, thus removing the need for a reallocation each time. Heap entries are packed into 16 bytes - a single u64 key encoding both the merge rank and position, and a u64 value encoding both token IDs - enabling comparison via a single integer comparison.

Regex

PCRE2 (a high-performance regex library with JIT compilation) is preferred when the pattern is supported, falling back to fancy-regex otherwise. One copy of the compiled regex is pre-allocated per thread at initialization, each with an independent DFA cache. This eliminates the lock contention that would occur if threads shared a single compiled regex, and allows the per-thread DFA caches to stay warm across calls.

For tokenizers that do not require advanced features like lookaheads, which force the use of fancy-regex, the PCRE2 JIT path is substantially faster. The pattern is compiled to native machine code once and reused for every subsequent match.

Experiments

Baselines & setup

To the best of our knowledge, the vast majority of models that rely on BPE tokenization implement their tokenizer through the HuggingFace AutoTokenizer library [2]. For this reason, we use it as our primary baseline, since it is widely adopted across the community and represents the default tokenizer used in many real-world deployments.

Our experiments focus on two types of evaluations. The first evaluates tokenization speed in isolation through microbenchmarks. In these experiments we compare the time required to tokenize the same inputs across different batch sizes using batch encoding. The second evaluation consists of end-to-end benchmarks in which we measure the time to first token (TTFT) of a query. For this purpose we use NVIDIA Dynamo with SGLang as the inference backend. The goal of the end-to-end evaluation is to verify that the tokenization optimizations translate into measurable improvements in downstream inference workloads, which is the primary motivation for this work.

In our microbenchmarks, we compare four different models across three different systems: NVIDIA HGX H100, HGX B200, and NVIDIA GB200 NVL72 systems. Each platform uses a different CPU, which can impact tokenization performance. The HGX H100 system uses an Intel Xeon 8468V, the HGX B200 system uses an Intel Xeon 8568Y, and the GB200 NVL72 system uses NVIDIA Grace CPU. Our goal is to ensure that the tokenization optimizations remain robust across the different CPU architectures that are commonly used in modern inference and training infrastructure.

For the end-to-end benchmarks, we currently report results on GB200 NVL72, which is a rack-scale design combining 72 Blackwell GPUs and 36 Grace CPUs and HGX B200. We plan to extend these experiments and share additional results across the other hardware platforms in future updates.

Tokenization speed comparison (microbenchmark)

In these experiments we measure the pure tokenization time, excluding any server or framework overhead. We compare the stock HuggingFace AutoTokenizer using the fast implementation with our optimized tokenizer, fastokens.

To strengthen the validity of our evaluation, we use two commonly used benchmark datasets. The first is ShareGPT [3], a collection of chat conversations gathered by the open source community in 2023. This dataset contains text from multiple languages and domains, including coding tasks, general conversations, and other instruction-style interactions which introduces a variety of use cases. The second dataset is LongBench-v2 [4], which consists of long English documents designed to evaluate long-context workloads.

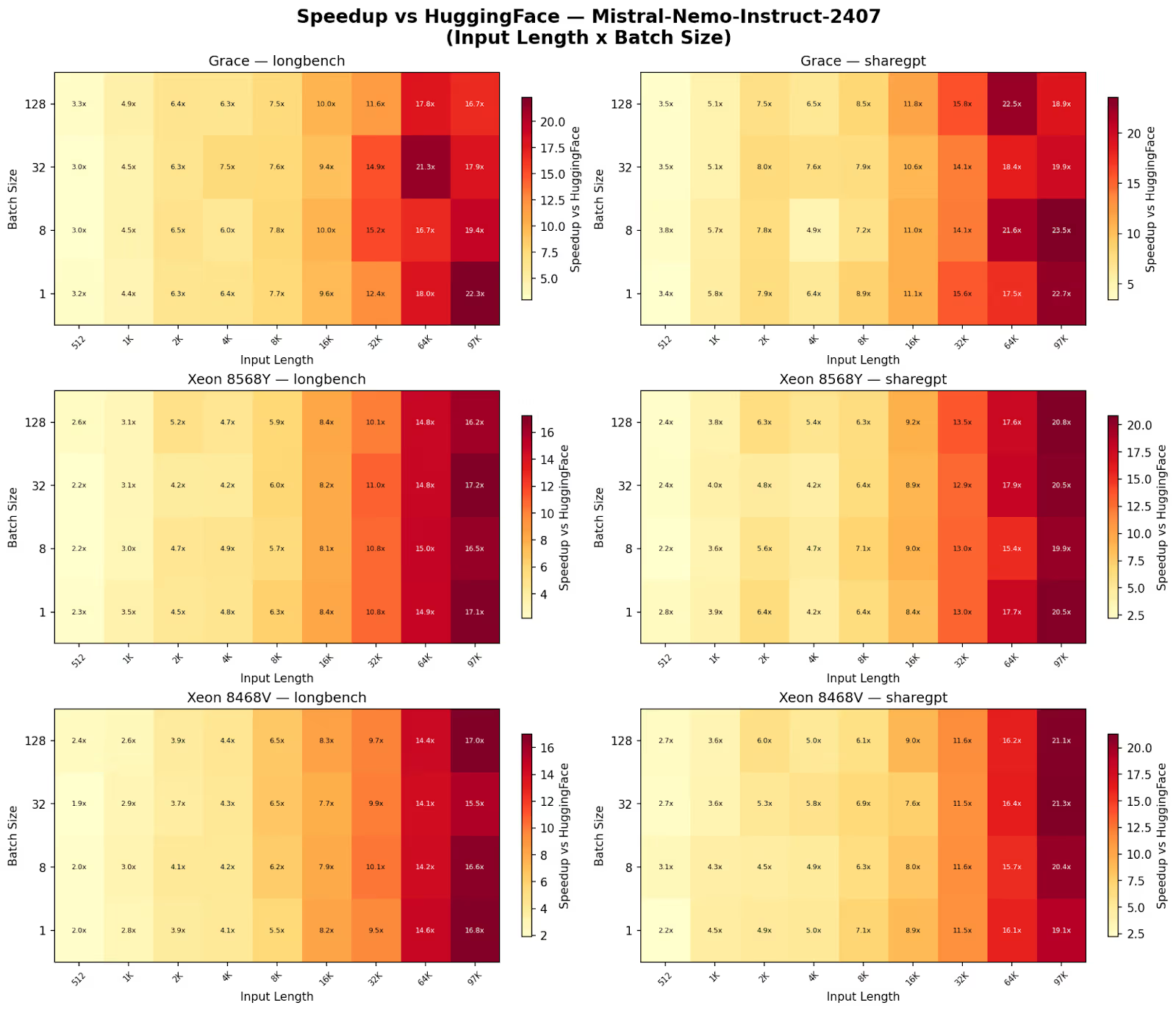

As mentioned earlier, we evaluate four different models, each using a different tokenizer. The models include DeepSeek V3.2, GPT-OSS 120B, MiniMax M2.1, and Mistral-Nemo-Instruct-2407.

Key findings

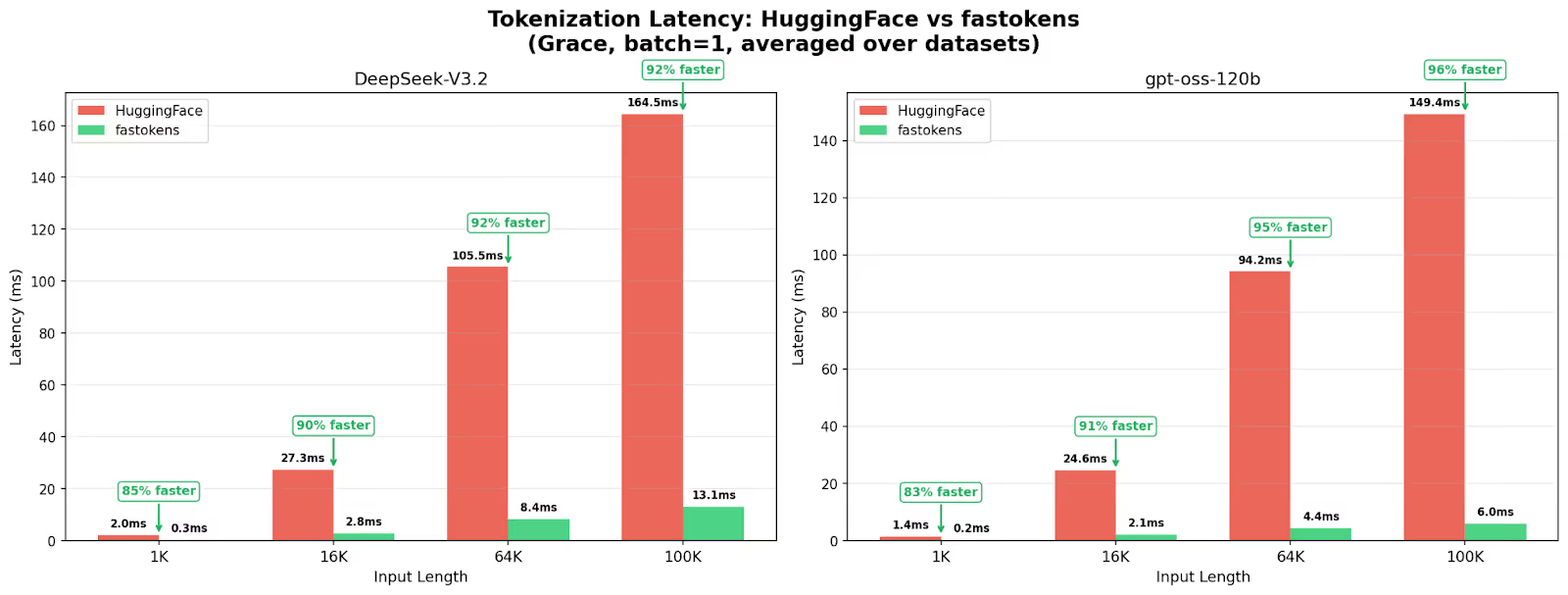

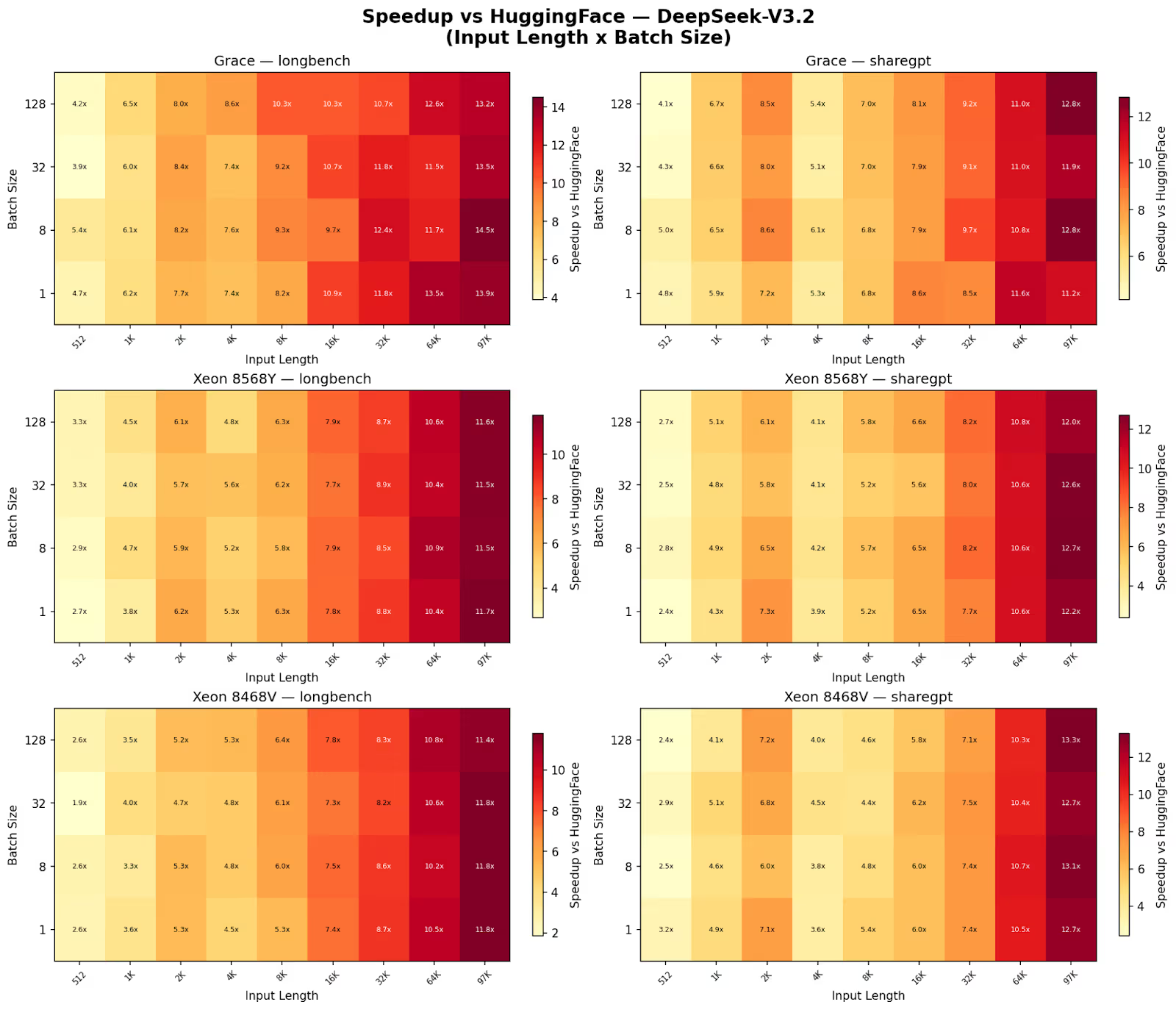

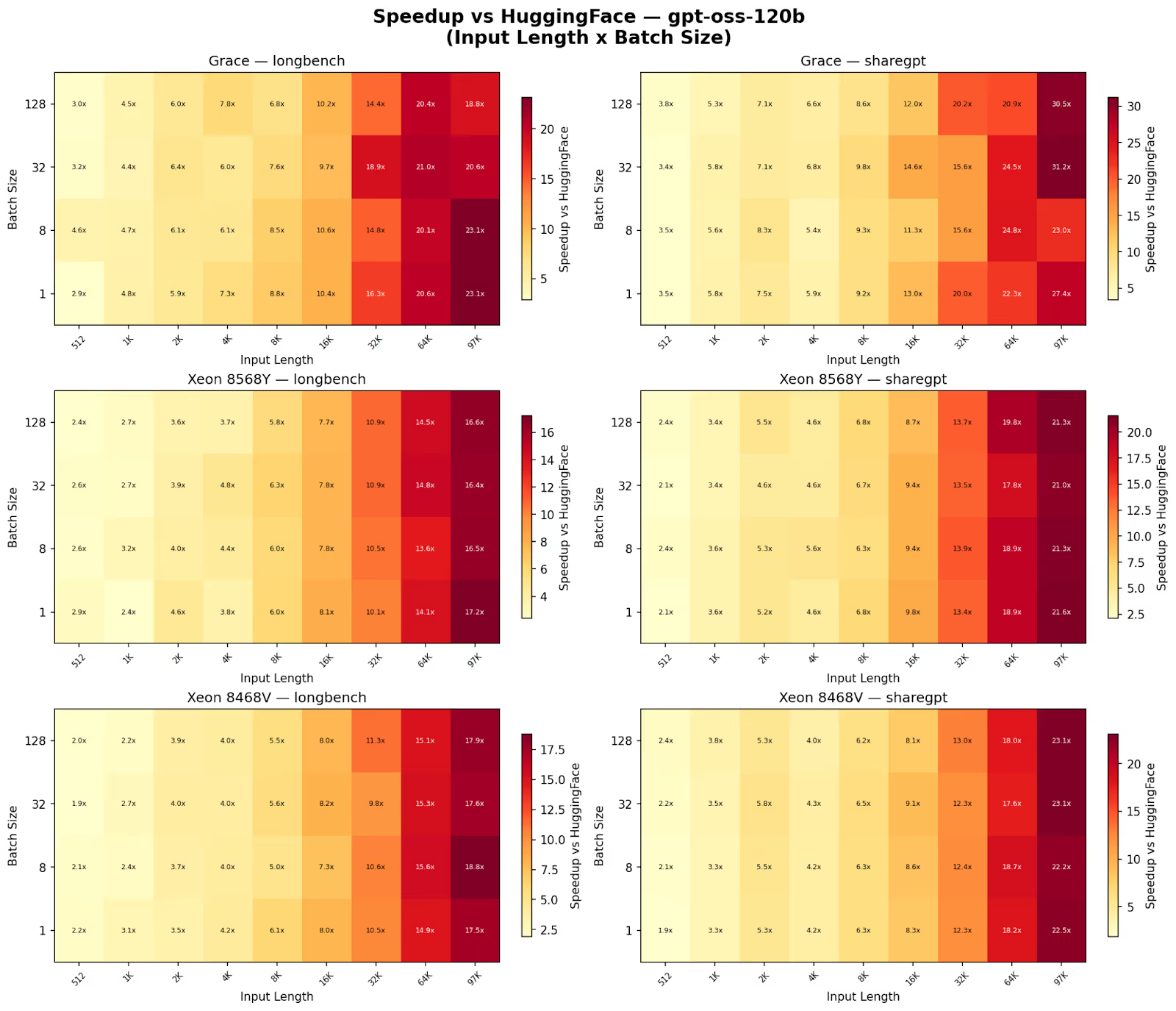

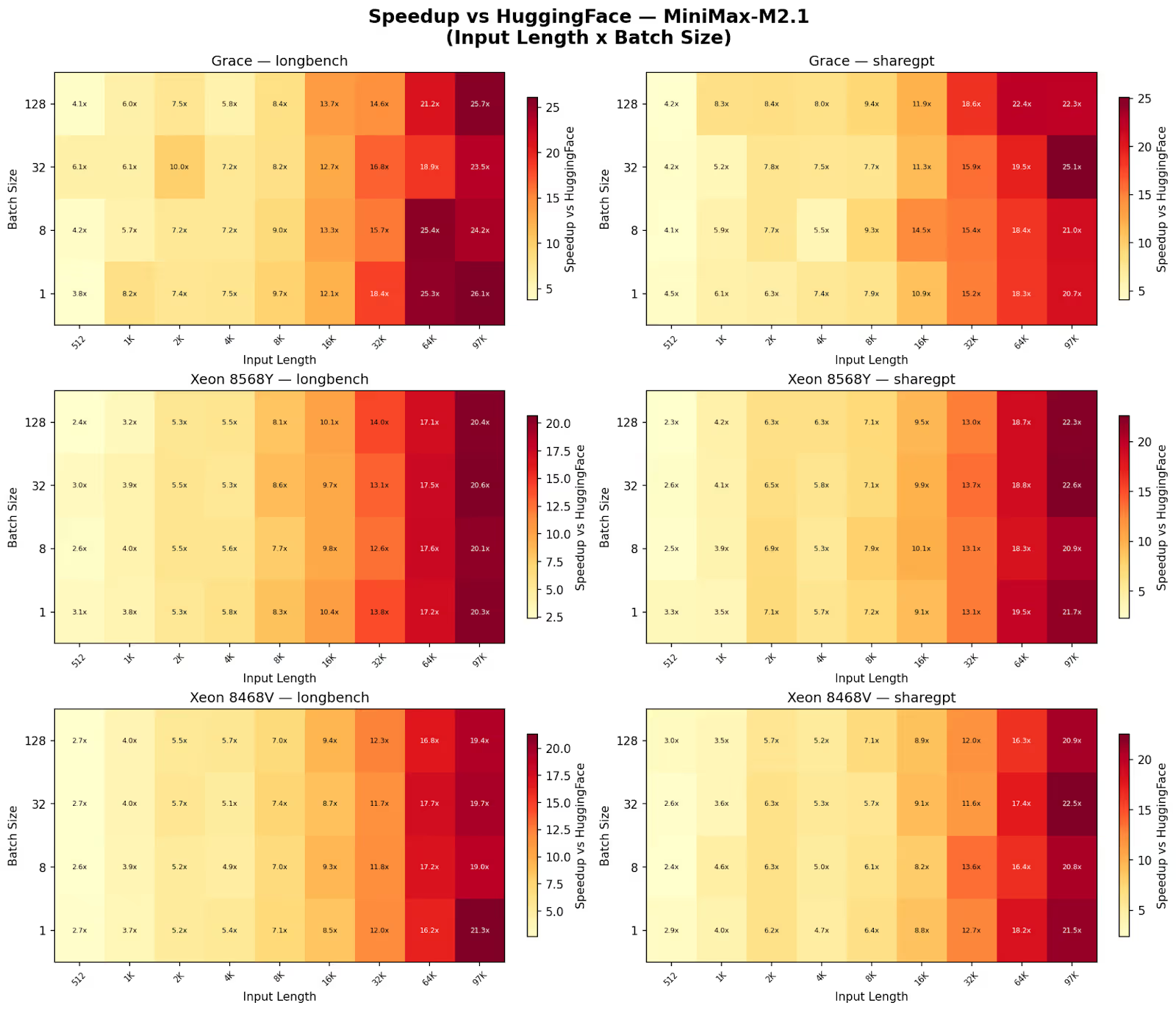

Consistent throughput advantage. fastokens achieves higher throughput than the HuggingFace baseline across all tested models, processors, datasets, batch sizes, and input lengths, with no configuration exhibiting a regression. We see superlinear scaling with sequence length as speedup grows from 2-4x at 512 sequence length to 22x on average at 100k tokens. In absolute terms, on the Grace CPU at batch=1, HuggingFace baseline latency reaches 149-165ms at 100K tokens while fastokens completes in 6-13ms, a reduction of over 92%. At 64K tokens, baseline latency is 94-106ms versus 4-8ms for fastokens. Even at 16K tokens, the gap is already significant: 25-27ms baseline versus 2-3ms for fastokens.

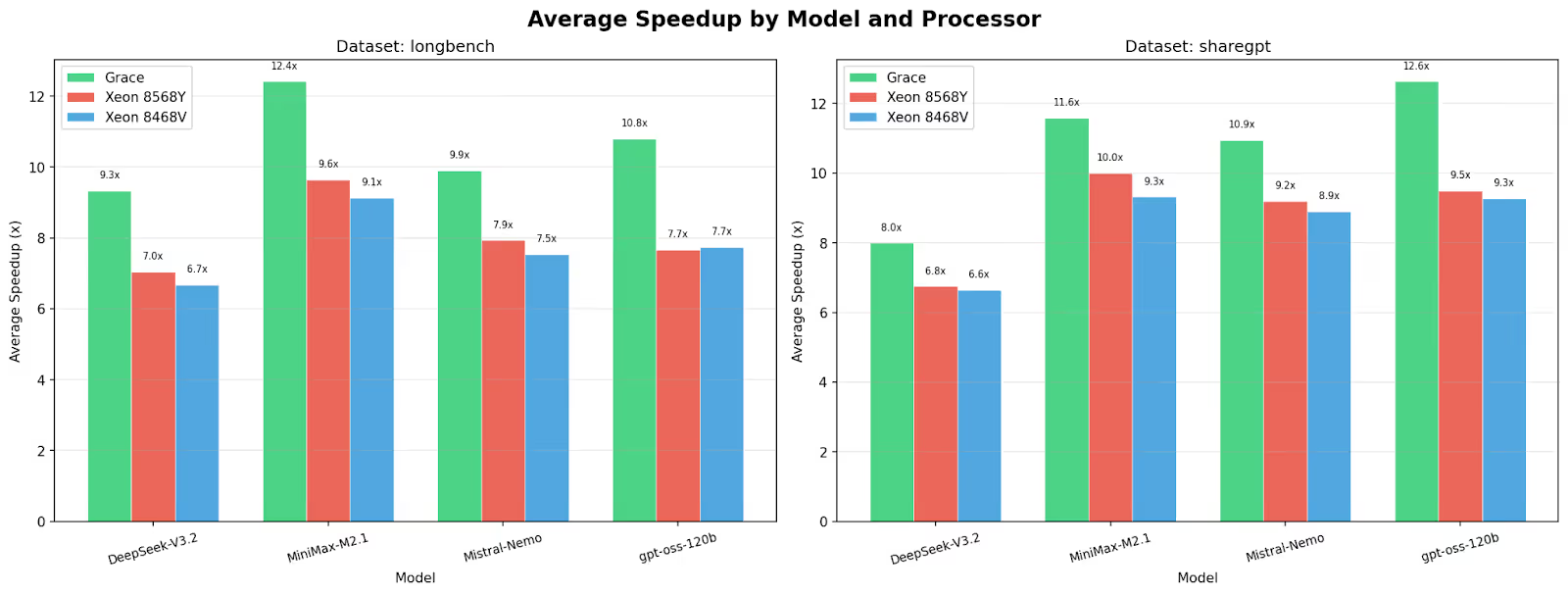

Processors show different speedups. Grace CPUs in the GB200 NVL72 system consistently yield the highest speedups (9.3–12.6× average), followed by Xeon 8568Y (6.8–10.0×) and Xeon 8468V (6.6–9.3×). We note that alternative tokenization implementations and parallelization strategies may yield different performance characteristics on x86 and other CPUs.

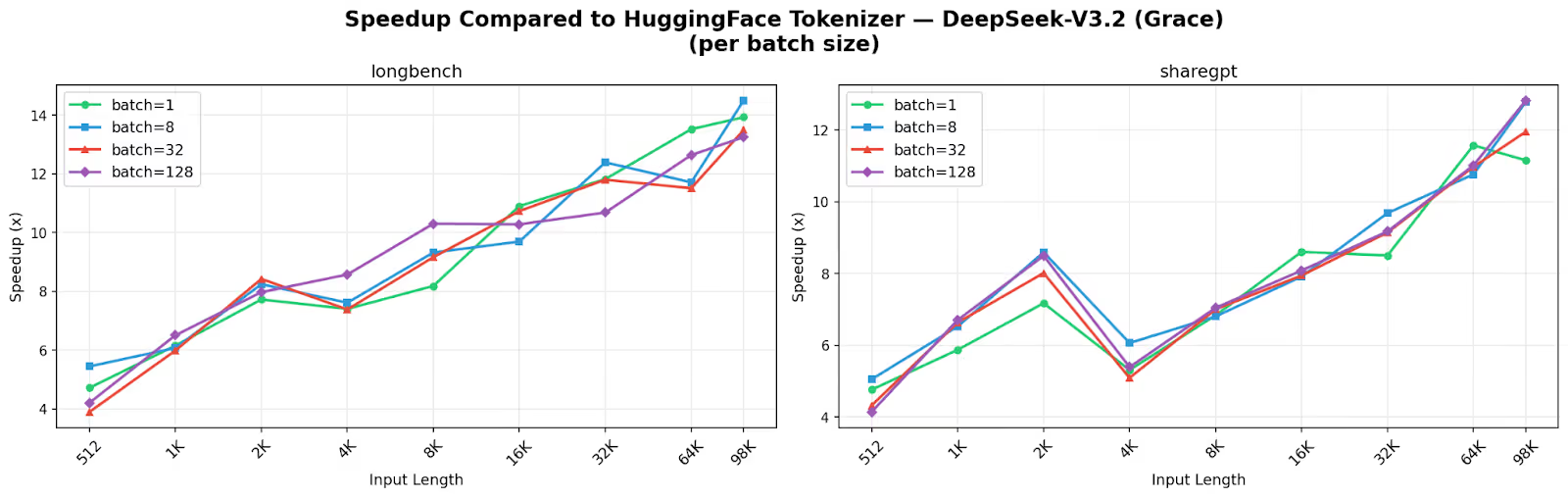

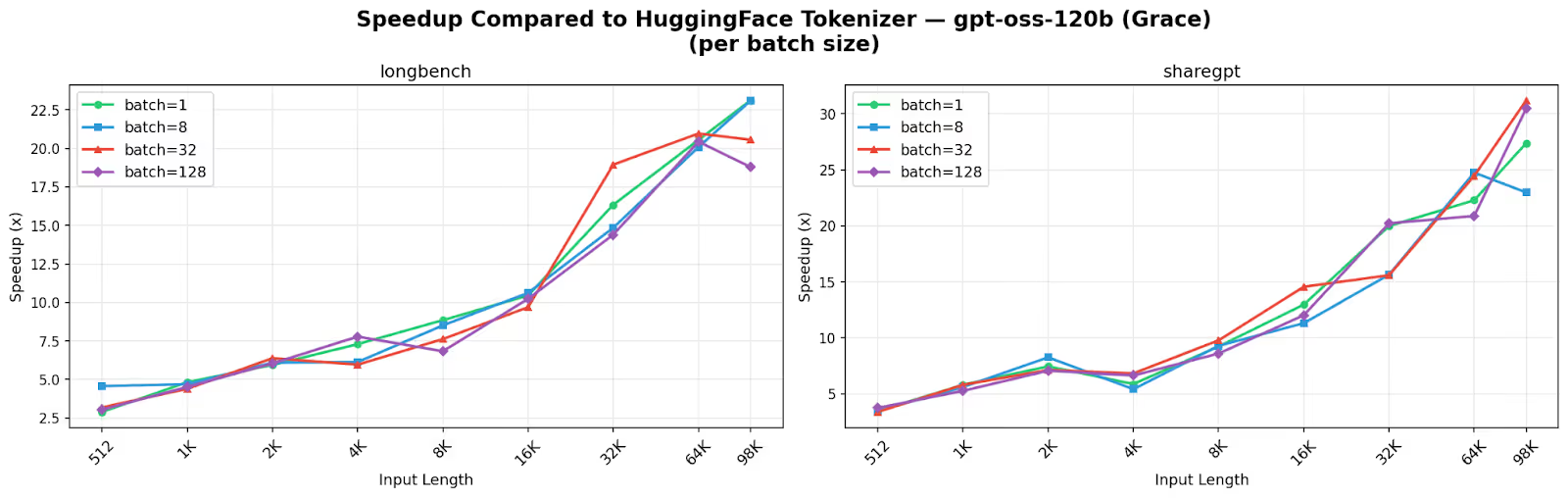

Robustness to batch size variation. Speedup remains largely invariant to batch size at a given input length, with variance dominated by the sequence length dimension. For example, on Grace CPU at 65K input length on longbench, DeepSeek-V3.2 achieves 13.5x, 11.7x, 11.5x, and 12.6x speedup at batch sizes 1, 8, 32, and 128 respectively, while gpt-oss-120b achieves 20.6x, 20.1x, 21.0x, and 20.4x at the same batch sizes. By contrast, across input lengths at a fixed batch size, speedup ranges from 4.7x to 13.9x for DeepSeek-V3.2 and from 2.9x to 23.1x for gpt-oss-120b. Further details, including per-batch-size results for MiniMax-M2.1 and Mistral-Nemo as well as breakdowns across all three hardware platforms, are provided in Appendix A.

End-to-end benchmark on Dynamo with SGLang

As mentioned earlier, we also aim to demonstrate that our optimizations have a direct impact on user experience in real inference workloads. The primary metric affected by tokenization is time to first token (TTFT). For a given user query, the total time until the first generated token is returned includes the tokenization stage. Since many deployments operate under strict TTFT service level agreements, reducing even a few milliseconds can be important.

To evaluate the impact under realistic conditions, we analyzed statistics from real agentic workload traffic. We observed that KV cache hit rates often reach around 90 percent, while prompt sizes commonly exceed 32k tokens and can reach up to 128k tokens. For such workloads, we deploy Prefill–Decode disaggregation to meet strict TTFT requirements. Accordingly, we model the prefiller stage using an output sequence length of 1 and a batch size of 1, as these latency constraints generally prevent batching multiple long prompts on a single prefiller.

Based on these observations, we collaborated closely with the NVIDIA Dynamo team to integrate fastokens into the Dynamo frontend and supported evaluations of the system through Dynamo.

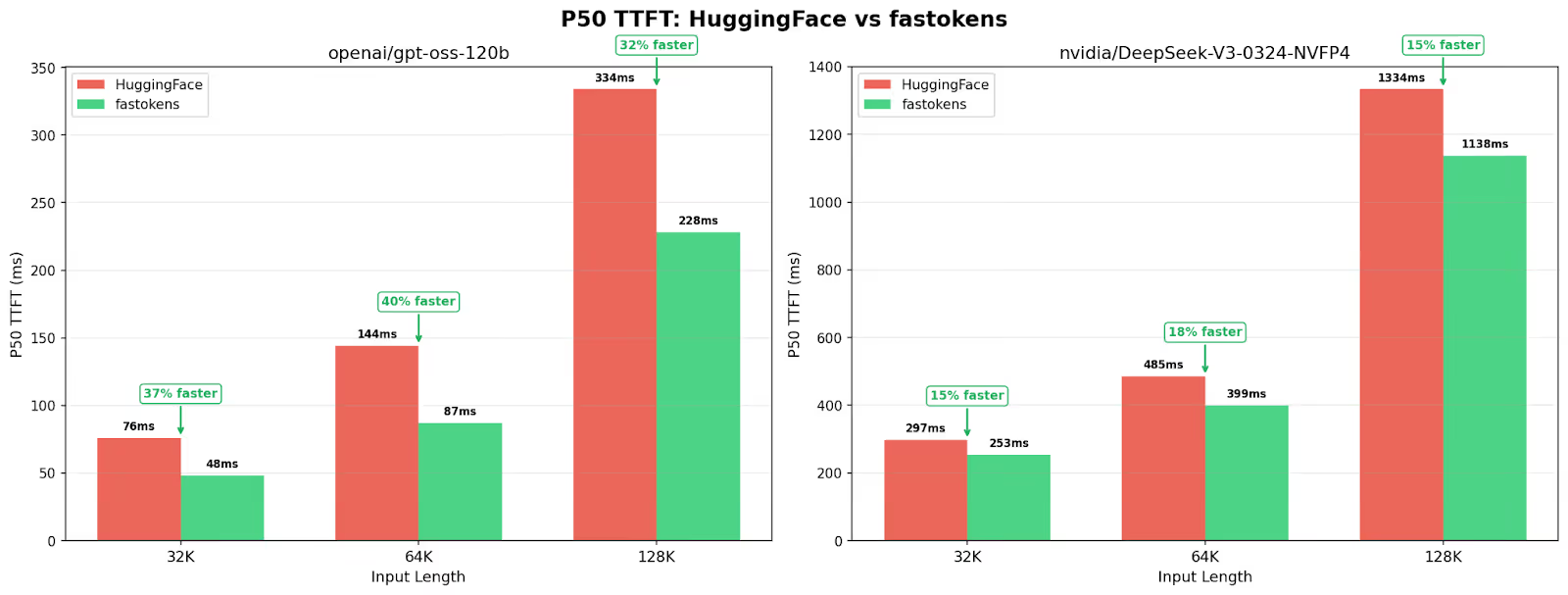

We ran end-to-end experiments using two models, DeepSeek V3 and GPT-OSS-120B, over a simulated workload. The experiments were conducted on HGX B200 and GB200 NVL72 systems using NVIDIA Dynamo with SGLang as the inference backend.

The results reflect the contribution of tokenization to the overall TTFT. For GPT-OSS-120B we observe an improvement of up to 40% in TTFT, while for DeepSeek V3 the improvement reaches up to 18%. This difference can be explained through Amdahl’s Law [5]. Tokenization represents only one component of the overall TTFT pipeline. While tokenization scales roughly linearly with input length and is largely agnostic to model size, the prefill stage depends on model size and compute characteristics and often dominates latency. As a result, when prefill accounts for a larger share of the total latency, the maximum achievable end-to-end improvement from accelerating tokenization becomes smaller.

Open source & drop-in integration

We release fastokens as an open-source project under the Apache 2.0 license that can be installed with uv pip install. It integrates with HuggingFace transformers with a single call and requires no downstream code modifications. fastokens is currently integrated with NVIDIA Dynamo, and can be used by setting the flag --tokenizer to fastokens.

# Use fastokens directly

from fastokens._native import Tokenizer

tokenizer = Tokenizer.from_model("deepseek-ai/DeepSeek-V3.2")

tokens = tokenizer.encode("A very long prompt that is now lightning fast.")

# Minimal integration with HuggingFace transformers

import fastokens

# This call globally patches AutoTokenizer

fastokens.patch_transformers()

from transformers import AutoTokenizer

tokenizer = AutoTokenizer.from_pretrained("deepseek-ai/DeepSeek-V3.2")

tokens = tokenizer.encode("A very long prompt that is now lightning fast.")Conclusion & future work

fastokens provides a substantial speedup for tokenization while requiring no changes to existing integrations. In long-context workloads, faster tokenization can reduce time-to-first-token (TTFT) and improve serving throughput, which in turn may positively impact both user experience and operational efficiency.

Current Limitations: Several existing models are currently unsupported due to unique components in their tokenization pipeline; optimizing these paths is a natural next step and part of our planned future work.

We welcome community contributions and requests for additional model support at: https://github.com/Atero-ai/fastokens

Acknowledgments

We thank the entire NVIDIA Dynamo team for their valuable feedback and support. We especially thank Biswa Ranjan Panda for helping integrate fastokens into Dynamo and for his rigorous testing throughout the process, and Itay Neeman for his valuable feedback and suggestions.

Appendix

Appendix A: Speedup comparisons across hardware, datasets, and batch sizes

In this section, we provide a detailed visual analysis of fastokens performance across models, processors, and input lengths.

References

[1] GitHub - ai-dynamo/dynamo: A Datacenter Scale Distributed Inference Serving Framework

[2] GitHub - huggingface/tokenizers: 💥 Fast State-of-the-Art Tokenizers optimized for Research and Production

[3] RyokoAI/ShareGPT52K · Datasets at Hugging Face

[4] LongBench v2

%201.avif)

.jpg)